Two Years of Real AI Development

Two years. Three tools. One honest progression.

Beyond the influencer hype claiming "10x developer speed," here's what actually happens when you use the tools that matter: GitHub Copilot, Cursor, and Claude Code.

The Three Tools That Actually Matter

Most people using AI productively aren't boasting on social media. The hype comes from influencers riding the wave to harvest eyeballs. Real developers use AI for discussing ideas, brainstorming architecture, validating code, documentation, code reviews, and automating daily tasks.

Here's my honest progression through the only three tools that moved the needle:

August 2023-2024: GitHub Copilot Era (1 Year)

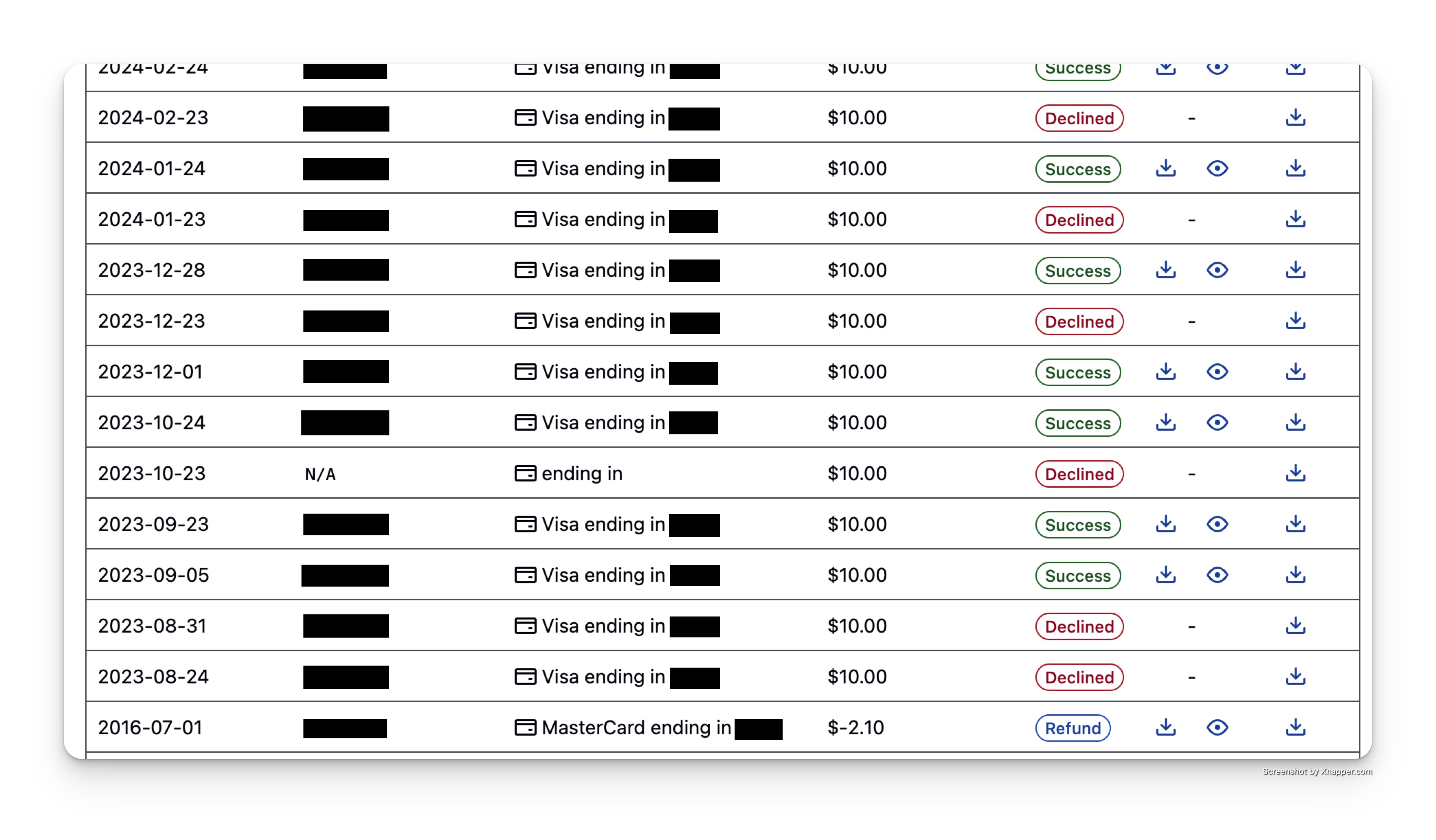

Started with GitHub Copilot in my IDE. Used it consistently for a full year, paying $10/month since August 2023.

Reality: Great for autocomplete and boilerplate, but fundamentally limited to line-by-line suggestions. Good starting point, not a game changer.

September 2024-May 2025: Cursor Era (7-8 Months)

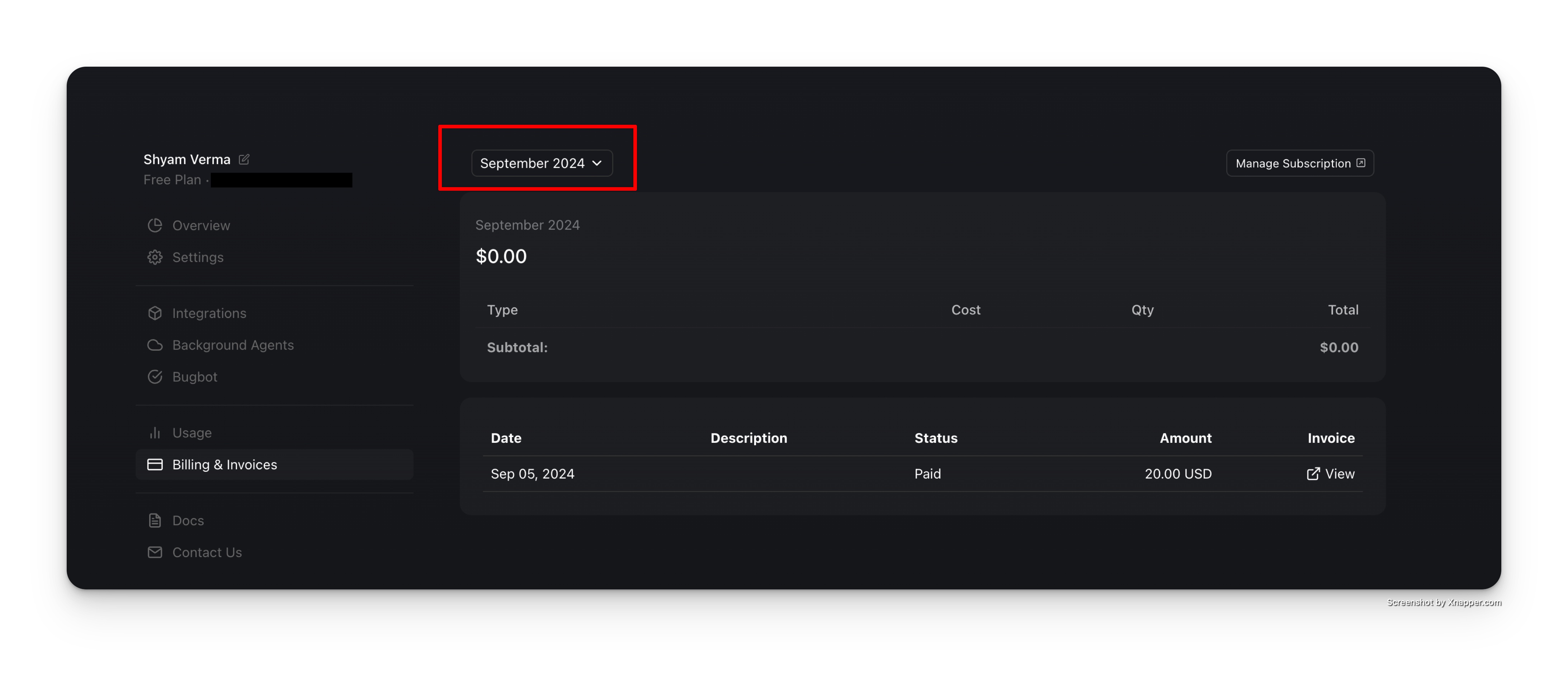

Switched to Cursor on September 5, 2024. This was a significant upgrade from Copilot.

Why it worked: Context-aware editing across entire codebases. Finally felt like pair programming with an AI that understood the bigger picture. But I was still driving - AI was the assistant.

Jan-June 2025: The Docker Container Problem

I develop inside Docker containers, but AI tools run on the host machine. They could edit code in containers but couldn't run commands, execute tests, or run scripts inside the Docker environment.
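To make the host/container gap concrete: a command issued on the host only reaches the container if something explicitly wraps it, for example with `docker exec`. The sketch below is purely illustrative and does not describe how any of these tools work internally. The helper name `build_exec_command`, the container name `dev`, and the `/app` path are all hypothetical.

```python
from typing import List, Optional


def build_exec_command(
    container: str,
    command: List[str],
    workdir: Optional[str] = None,
) -> List[str]:
    """Wrap a command so it runs inside a container instead of on the host.

    `container` and `workdir` are placeholders for illustration; substitute
    whatever your own setup uses.
    """
    if not command:
        raise ValueError("command must not be empty")
    wrapped = ["docker", "exec"]
    if workdir is not None:
        # --workdir sets the working directory inside the container
        wrapped += ["--workdir", workdir]
    wrapped.append(container)
    wrapped += command
    return wrapped


if __name__ == "__main__":
    # On the host, "pytest -q" would use the host's interpreter and packages.
    # Wrapped, the same command targets the container's environment instead.
    print(" ".join(build_exec_command("dev", ["pytest", "-q"], workdir="/app")))
```

The resulting list can be passed to `subprocess.run` on a machine where Docker is installed and the container is running. The point is only that every command needs this extra hop when the tool lives on the host.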

The MCP Issue: When MCP (Model Context Protocol) was introduced in November 2024, it became crucial for AI development workflows. But I had no way to run MCP tools from inside containers. AI tools were essentially blind to the actual development environment where code needed to run.

June 2025-Present: Claude Code Era

Complete switch to Claude Code. First AI tool that works seamlessly in my Docker container development environment.

Why it's different: True pair programming experience. I actively monitor code as it's written, discuss architecture, validate decisions, and debug complex problems together.

The Container Solution: Claude Code runs inside the container, not on the host machine. It can execute commands, run tests, access MCP tools, and work with the actual development environment where code runs. No more host/container disconnect.

The dynamic flipped. Now AI writes the code and I guide it, instead of the other way around.

August 2025: GitHub Copilot Cloud Reality Check

Tested GitHub Copilot Cloud for working on GitHub issues automatically. Results were disappointing - it would wander into unfamiliar parts of the codebase without stopping to check in, making unhelpful changes that lacked context.

Current use: Only useful for code reviews. For actual development work, Claude Code remains superior. Turns out automation without monitoring creates more problems than it solves.

The Reality Check: 2x Speed, Not 10x

Current Setup: Claude Code Max subscription with MCP, SubAgents, custom Commands, and Rules.

Actual Speed Gain: 2x faster development, not the mythical 10x claimed by influencers.

What AI Actually Does:

- Active Code Monitoring: Like pair programming - I watch and guide while AI writes

- Architecture Discussions: Brainstorming and validating design decisions

- Code Review: Catching issues and suggesting improvements

- Documentation: Generating and maintaining project docs

- Command Execution: Running tests, scripts, and build processes in the actual dev environment

- Development Automation: Build scripts, deploy scripts, dev setup, Docker configurations

- Data Processing: Cleaning unstructured data, creating import scripts, analyzing logs

- Internal Tooling: Bash automations, internal dashboards, development utilities

- MCP Integration: Access to development tools and context that match where code runs

What AI Doesn't Do:

- Replace the mental work of deciding WHAT to build

- Eliminate the need to understand HOW systems work

- Magically solve complex architectural problems

I'm skeptical of anyone claiming 10x gains. Maybe for boilerplate-heavy work, but real development still requires thinking through architecture and edge cases. AI amplifies what you already know - it doesn't replace the fundamentals.
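One way to see why the overall gain stays well below the headline number is an Amdahl's-law style estimate: only the part of the work that AI accelerates gets faster, while the thinking time stays the same. The sketch below is a back-of-the-envelope model, not a measurement. The fractions and speedups in it are assumed values chosen for illustration, and `overall_speedup` is a hypothetical helper.

```python
def overall_speedup(assisted_fraction: float, assisted_speedup: float) -> float:
    """Estimate the end-to-end speedup when only part of the work is accelerated.

    assisted_fraction: share of total time spent on work AI speeds up (0..1).
    assisted_speedup:  how many times faster that share becomes.
    Both inputs are assumptions you supply, not measured values.
    """
    if not 0.0 <= assisted_fraction <= 1.0:
        raise ValueError("assisted_fraction must be between 0 and 1")
    if assisted_speedup <= 0:
        raise ValueError("assisted_speedup must be positive")
    # Time left over: the untouched share plus the accelerated share.
    remaining_time = (1.0 - assisted_fraction) + assisted_fraction / assisted_speedup
    return 1.0 / remaining_time


if __name__ == "__main__":
    # Assumed: 60% of the time is typing-heavy work that becomes 6x faster.
    print(round(overall_speedup(0.6, 6.0), 2))
    # Assumed: half the work becomes almost instantaneous.
    print(round(overall_speedup(0.5, 1e9), 2))
```

Under these assumed inputs the estimate lands at roughly 2x in both cases. A 10x result only appears when nearly all of the work is the kind AI accelerates, which is the boilerplate-heavy case.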

Real AI Development in Practice

I now think in "Change Sets" instead of "File Edits" - AI helps implement entire features systematically rather than editing files one at a time.

But the cognitive load of architecture, debugging complex issues, and making strategic decisions? That still remains human work (AI can help by gathering the data you need to decide).